Defining Machine Learning, Deep Learning & Neural Networks

Part 2 of our AI Security Blog Series

In our previous blog post, “What is Artificial Intelligence, Anyway,” we broke down what the term “artificial intelligence” (AI) actually means and provided examples of the types of AI: reactive, limited learning, theory of mind and self-aware. Reactive and limited learning AI exist today, while theory of mind and self-aware AI are still the stuff of science fiction.

Now let’s take a closer look at some terms that need more clarification: machine learning, deep learning and neural networks.

On the surface, it might seem like these terms are all just different ways of referring to artificial intelligence. In reality, these are more specific elements of AI as a whole.

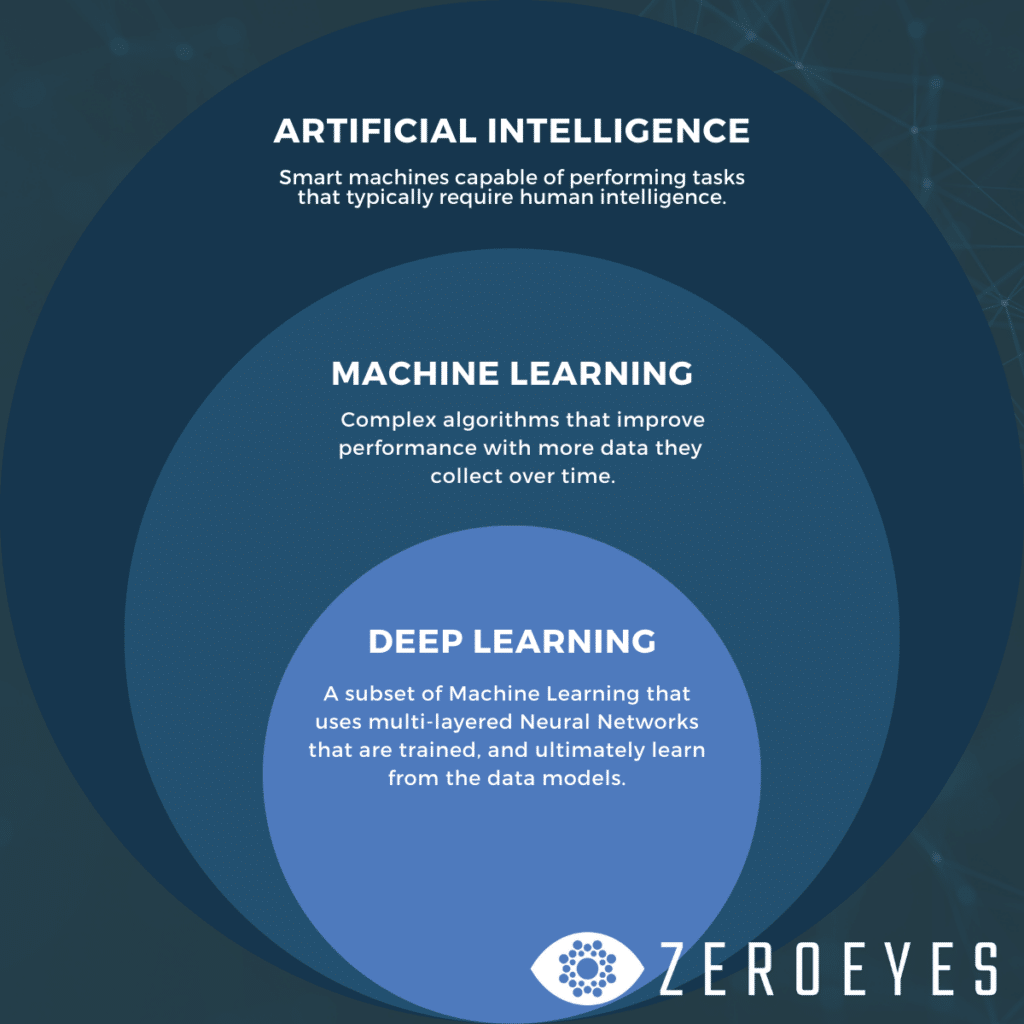

As we talked about in our previous post, AI is the science of creating machines that mimic the brainpower of human beings. Machine learning is a type of AI, deep learning is a type of machine learning, and neural networks make up the backbone of deep learning.

Think of all of these things in terms of concentric circles, shown in the illustration below. All deep learning is machine learning, and all machine learning is AI, but not all AI is machine learning or deep learning. AI that’s purely reactive (like manufacturing robots) can’t learn on its own.

Machine Learning

Machine learning is a type of limited learning artificial intelligence. It’s made up of algorithms that perform tasks better and better as they are exposed to more data over time.

Thanks to their complex algorithms, these machines “learn” or improve their problem-solving performance with each new experience they have, and with less human intervention (or programming).
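To make “improving with more data” a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the data is synthetic (two made-up groups of numbers), and the “model” is about the simplest learner possible, one that just remembers the average value it has seen for each label. Real machine learning systems are far more sophisticated, but the basic loop of learning from examples and then being scored on new ones is the same.

```python
import random

def make_samples(rng, n):
    """Return n labeled points: label 0 clusters near 0.0, label 1 near 4.0."""
    samples = []
    for _ in range(n):
        label = rng.randint(0, 1)
        value = rng.gauss(4.0 * label, 1.0)
        samples.append((value, label))
    return samples

def train(samples):
    """'Learn' one number per label: the average value seen for that label."""
    sums = {0: 0.0, 1: 0.0}
    counts = {0: 0, 1: 0}
    for value, label in samples:
        sums[label] += value
        counts[label] += 1
    # Fall back to a neutral guess of 0.0 if a label was never seen.
    return {lbl: (sums[lbl] / counts[lbl] if counts[lbl] else 0.0) for lbl in (0, 1)}

def predict(model, value):
    """Pick the label whose learned average is closest to the new value."""
    return min(model, key=lambda lbl: abs(value - model[lbl]))

def accuracy(model, samples):
    """Fraction of samples the model labels correctly."""
    correct = sum(1 for value, label in samples if predict(model, value) == label)
    return correct / len(samples)

rng = random.Random(0)              # fixed seed so runs are repeatable
test_set = make_samples(rng, 2000)  # held-out data the model never trains on

# Train on progressively more examples and score each model on the same test set.
for n in (2, 20, 2000):
    model = train(make_samples(rng, n))
    print(n, "training examples -> accuracy", round(accuracy(model, test_set), 3))
```

With only a couple of examples the learned averages can be far off, so the score is unreliable; with thousands of examples they settle close to the true group centers.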

Real-world examples: Speech recognition software like Amazon’s Alexa and Google Home. These machines recognize patterns in speech, including intensities in time-frequency and tone, and “learn” over time to translate the speech patterns to text that they can use to perform functions (like ordering your groceries for delivery).

Another example: medical diagnosis. When doctors use speech recognition and image scanning together, the machine learning algorithms help them find similarities in symptoms, compare scans against databases of images for rare diseases – and even come up with treatment options.

Deep Learning & Neural Networks

Deep learning is a subset of machine learning. It uses neural networks that are trained on, and ultimately learn from, the data they’re given.

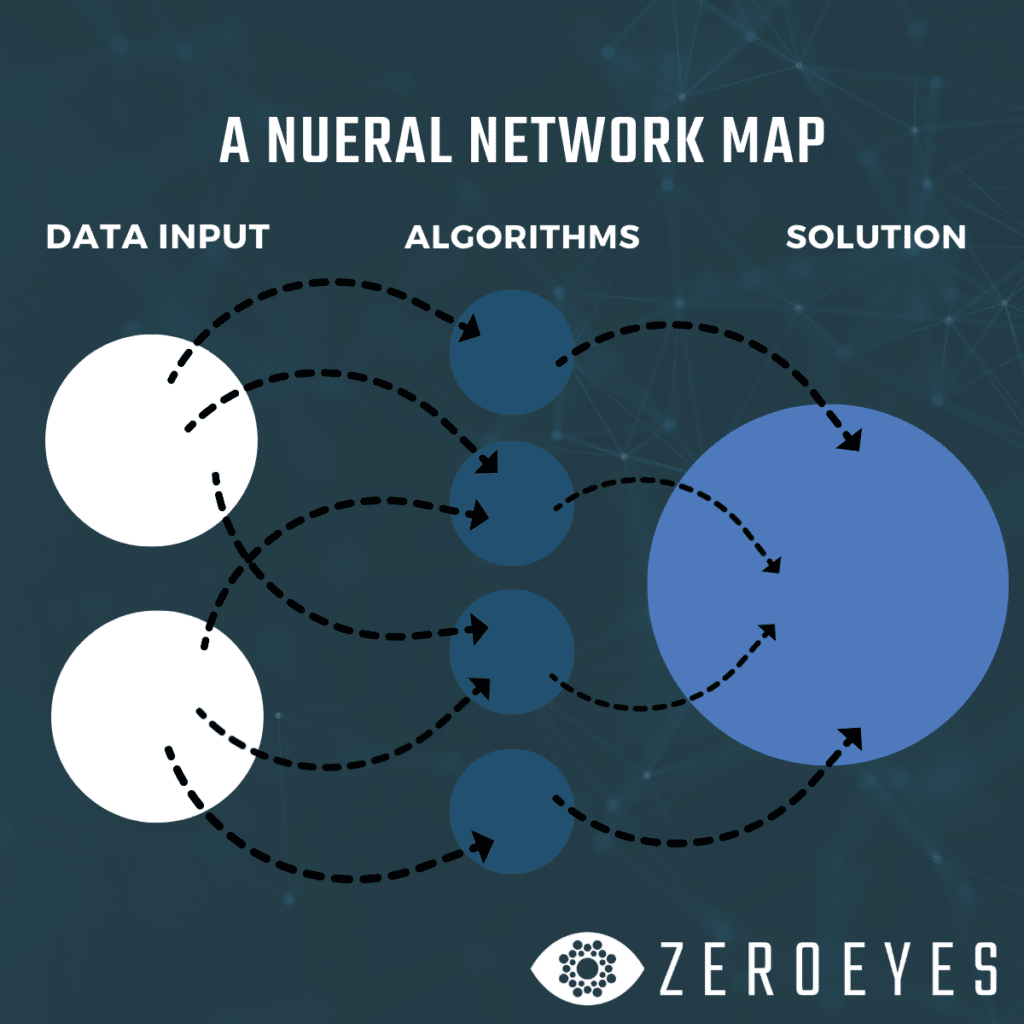

What’s a neural network? Simply put, it’s a series of algorithms that takes input information and recognizes relationships within that data in a way that mimics how the human brain works. The networks “see” something, process the information and solve problems by applying the patterns they learned from huge amounts of training data to find the best solution.

Through these algorithms, neural networks can adapt to the changing information they receive to give the best possible response. When you see an image of an interconnected web, like the one at the top of this blog post, that’s an illustration of a neural network – a web of synthetic neurons all working together to find a solution to the problem it faces. Just like the human brain.
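For readers who like to see the moving parts, a single synthetic neuron can be sketched in a few lines of Python. This is a textbook-style illustration, not code from any particular product: the function name `neuron` and the example weights are made up for this post. The neuron multiplies each input by a weight, adds everything up along with a bias, and squashes the result into a value between 0 and 1 with a sigmoid function.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs, then a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))  # sigmoid keeps the output in (0, 1)

# Hypothetical weights: this neuron "fires" strongly only when both inputs are on.
weights = [5.0, 5.0]
bias = -7.5

for inputs in ([0.0, 0.0], [1.0, 0.0], [1.0, 1.0]):
    print(inputs, "->", round(neuron(inputs, weights, bias), 3))
```

Training a network means nudging those weights and biases, across many neurons, until the outputs match the examples the network is shown.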

Deep learning typically uses neural networks with many layers, which is where the “deep” comes from, to continuously improve its performance and learn from roadblocks it encounters.
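Why do layers matter? A classic small example is the “exclusive or” (XOR) rule: output 1 when exactly one of two inputs is 1. A single neuron cannot represent that rule, but two layers of neurons can. The Python sketch below is illustrative only: the weights are picked by hand rather than learned, and the helper names `step`, `layer` and `xor_network` are invented for this post.

```python
def step(x):
    """Simplest possible activation: fire (1) if the total is positive, else 0."""
    return 1 if x > 0 else 0

def layer(inputs, weights, biases):
    """Run one layer: each row of weights (plus its bias) is one neuron."""
    return [
        step(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def xor_network(a, b):
    # Hidden layer: first neuron acts like OR, second neuron acts like AND.
    hidden = layer([a, b], [[1, 1], [1, 1]], [-0.5, -1.5])
    # Output layer: fire when OR is on but AND is off.
    output = layer(hidden, [[1, -1]], [-0.5])
    return output[0]

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_network(a, b))
```

Stacking layers this way lets each layer build on what the previous one worked out, which is the basic idea behind deeper networks as well.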

It exists today in a wide range of software that can perform intelligent tasks even better than humans – for instance, in image classification. Deep learning AI has the ability to see and classify images with an accuracy over 95%, whereas humans classify images with an error rate of 5% or greater (since we’re only human).

While that margin of error is only growing smaller as the technology advances, deep learning machines are still prone to “false positives” and they still make mistakes. These mistakes are taken into account when incorporating new data that trains the model to avoid past false positives.
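One common way to keep track of those mistakes is to compare a model’s predictions with the known correct answers and count each kind of outcome. The Python sketch below uses a tiny made-up set of labels and predictions (1 means “object present”, 0 means “not present”); the numbers are purely illustrative and do not come from any real system.

```python
def confusion_counts(labels, predictions):
    """Count true/false positives and negatives for yes/no predictions."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for actual, predicted in zip(labels, predictions):
        if predicted and actual:
            counts["tp"] += 1  # correctly raised an alert
        elif predicted and not actual:
            counts["fp"] += 1  # false positive: alert with nothing there
        elif actual:
            counts["fn"] += 1  # missed something that was there
        else:
            counts["tn"] += 1  # correctly stayed quiet
    return counts

# Made-up example data: what was really in each image vs. what the model said.
labels      = [1, 0, 0, 1, 0, 0, 0, 1]
predictions = [1, 1, 0, 1, 0, 0, 0, 0]

counts = confusion_counts(labels, predictions)
false_positive_rate = counts["fp"] / (counts["fp"] + counts["tn"])
precision = counts["tp"] / (counts["tp"] + counts["fp"])

print(counts)
print("false positive rate:", false_positive_rate)
print("precision:", round(precision, 2))
```

Examples that land in the false positive bucket are natural candidates to add, correctly labeled, to the next round of training data.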

Deep Learning in the Real World

Real-world examples of deep learning AI used for object detection include Tesla’s self-driving technology, intruder detection on your Ring doorbell and ZeroEyes’ DeepZero™ gun detection technology.

Think about it this way: how can you tell if someone you see on a street is holding a gun? You see them, your brain processes that there’s a person, that they’re holding an object, and the size, shape, and color of the object match with what your brain knows a gun looks like. Processing that information takes your brain almost no time at all.

ZeroEyes designed the deep learning neural networks in its gun detection software to react the same way the human brain does – but with a military-trained human verification system in place to catch any false positives.

We train our neural network models on hundreds of thousands of real-world images of different types of weapons, and of what it looks like when someone is holding those weapons, so they can accurately determine if a gun is present within a scene.

- The input data is the camera feed (from tens, hundreds or thousands of cameras)

- The network processes each stream and locates the gun if one is present

- The solution is detecting a match before the person in the frame becomes a threat
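The three steps above, together with the human verification step mentioned earlier, can be sketched as a simple loop. To be clear, this is a rough illustration and not a description of how DeepZero is actually built: the `Frame` and `Detection` types, the `scan_frames` function, the confidence threshold and the stand-in `fake_detector` are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional, Tuple

@dataclass
class Frame:
    camera_id: str
    pixels: object  # placeholder for real image data

@dataclass
class Detection:
    camera_id: str
    box: Tuple[int, int, int, int]  # where in the frame the object was located
    confidence: float               # model's score between 0 and 1

# A detector is any function that looks at a frame and may return a detection.
Detector = Callable[[Frame], Optional[Detection]]

def scan_frames(frames: Iterable[Frame], detector: Detector,
                min_confidence: float = 0.5) -> List[Detection]:
    """Run the detector on every frame and queue likely matches for human review."""
    review_queue = []
    for frame in frames:
        detection = detector(frame)
        if detection is not None and detection.confidence >= min_confidence:
            review_queue.append(detection)  # a person verifies before any alert
    return review_queue

def fake_detector(frame: Frame) -> Optional[Detection]:
    """Stand-in for a trained neural network; returns canned scores per camera."""
    scores = {"lobby": 0.92, "garage": 0.20}
    if frame.camera_id not in scores:
        return None
    return Detection(frame.camera_id, (10, 20, 50, 60), scores[frame.camera_id])

frames = [Frame("lobby", None), Frame("garage", None), Frame("hallway", None)]
queue = scan_frames(frames, fake_detector)
print([d.camera_id for d in queue])
```

In this sketch, only the high-confidence match is passed along for a person to check; the low-confidence result and the empty frame are dropped.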

As with all machine learning, our DeepZero™ technology constantly improves with each new update to the AI model it uses.

Our next blog post in this series will talk more about applying deep learning to security, using artificial intelligence to augment human intelligence, and the ethics of image recognition. Sign up for our newsletter for new posts sent directly to your inbox.
